In this post, I will explain the problem that the sea turtle researchers at Osa Conservation asked me to solve. It turns out that they have a data problem that I am sure many other people and organizations have.

What is the problem?

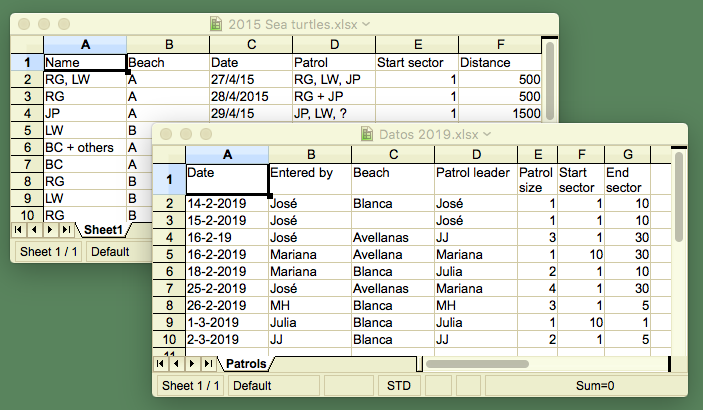

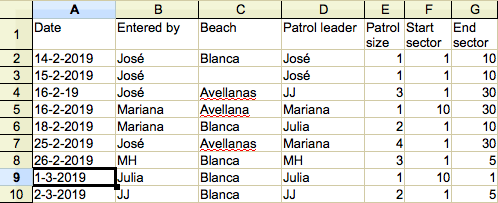

In short, the problem is that you may have multiple tables with data about the same thing, but differently structured, and then you want to bring it all together in one table.

The crux of the issue lies in the words ‘differently structured’. This could mean a number of things, including (but certainly not limited to) the following. Some are illustrated in the image above.

- It could mean that the order of the columns is different. Someone may have changed it because it was handier at some point.

- It could mean that the names of the columns do not match between different files anymore. For example, ‘date’ becomes ‘date of purchase’.

- It could mean that the format of the data differs. For programmers, a notorious case is how dates can be differently formatted.

- It could mean that even though the names of the columns are the same, the meaning is different. For example, ‘date’ could mean the date of an event in one file, and the date on which the data was entered in another. This one is particularly difficult to spot if you only have the data but no description (no metadata) of it.

- Another problem could be that the unit of what is measured changes. Say from meters to yards, from Deutschmark to Euro, and so on. Yes, it should be mentioned in the column header, but is it?

- Similarly, the coding system may change. Instead of the first names of the researchers, acronyms may be used. Or instead of yes/no, it could be yes/no/maybe/no-data.

- Finally, it could be that data is entered outside the area where you would expect it, meaning in columns without a heading or in rows far below where a block of rows ends. This could be accidental scrap data or intentional additions of, for example, summary data.
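To make the first two items on this list concrete, here is a minimal sketch in Python of how differing column names and column order could be brought back to one layout. The canonical column names, the header aliases and the sample rows are all invented for illustration; they are not taken from the sea turtle files.

```python
# Hypothetical target layout and header aliases, invented for this example.
CANONICAL_COLUMNS = ["date", "beach", "nest_count"]
HEADER_ALIASES = {
    "date": "date",
    "date of survey": "date",
    "beach": "beach",
    "beach name": "beach",
    "nests": "nest_count",
    "nest count": "nest_count",
}

def harmonize(rows):
    """Rename known headers and return rows with columns in canonical order.

    `rows` is a list of dicts, one per table row (the shape csv.DictReader yields).
    An unknown header raises an error instead of being silently dropped.
    A canonical column that is missing from a row is filled with None.
    """
    result = []
    for row in rows:
        clean = {}
        for header, value in row.items():
            key = header.strip().lower()
            if key not in HEADER_ALIASES:
                raise KeyError(f"unmapped column header: {header!r}")
            clean[HEADER_ALIASES[key]] = value
        result.append({name: clean.get(name) for name in CANONICAL_COLUMNS})
    return result

# Two made-up files with different header names and a different column order.
file_a = [{"Date": "2019-07-01", "Beach": "Beach A", "Nests": "4"}]
file_b = [{"Beach name": "Beach B", "Nest count": "2", "Date of survey": "2019-07-02"}]

combined = harmonize(file_a) + harmonize(file_b)
```

Raising an error on an unknown header is a deliberate choice in this sketch: a new or misspelled column then gets noticed instead of quietly disappearing from the combined table.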

Some of these problems cannot be solved unless you have additional information. If the header of a column only says ‘Date’ and you need to know if that is, say, the date of departure or the date of arrival, the data itself is not likely to tell you. In the remainder of this text, those types of problems are ignored because a database tool will not provide the answer either. You will have to do historical research, interview someone, or something similar.

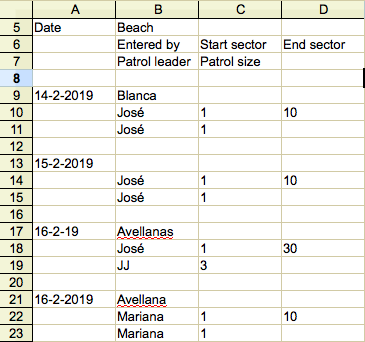

Another type of challenge that the database cannot solve is that the table is not actually a table, but a spreadsheet designed as a repetition of forms. See the two images that explain the difference.

So, setting aside those types of issues, each of the remaining ones from the list is not that difficult to solve. But of course, if left unchecked, changes may accumulate over time so that the first version of a file would not recognize the last. This is probably how you ended up with this problem.

Can this problem be solved manually?

How would you solve this if you only had manual labor, the spreadsheet software and the files? Well, it is possible, but it may not be very effective or efficient, as I will discuss in this section.

Each of the problems listed above has its own particular solution. For example, if the order of the columns is not the same, you need to reorder them (by dragging or copy-pasting) in a uniform way before copy-pasting them all into one file. If the formatting of a date differs between rows or tables, they need to be harmonized with the formatting and calculation functions that are available in the spreadsheet software. And so on. Every problem simply needs to be addressed one-by-one. In my view, with patience, systematic checking and conscientious work, this way, you can disentangle the biggest ‘data spaghetti’ imaginable.
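For comparison, the date harmonization step could look roughly like this in Python. This is only a sketch: the list of candidate formats is an assumption, and a real set of files would need its own list. Note that the order of the formats matters, because a date such as 03/04/2019 is ambiguous between day-first and month-first notation.

```python
from datetime import datetime

# Assumed formats, tried in this order. Day-first is assumed for slashed dates.
CANDIDATE_FORMATS = ["%Y-%m-%d", "%d/%m/%Y", "%d.%m.%Y"]

def to_iso_date(text):
    """Return the date in `text` as YYYY-MM-DD, or raise if no format matches."""
    for fmt in CANDIDATE_FORMATS:
        try:
            return datetime.strptime(text.strip(), fmt).date().isoformat()
        except ValueError:
            continue  # this format did not match, try the next one
    raise ValueError(f"unrecognized date format: {text!r}")

# Three made-up spellings of the same day.
dates = [to_iso_date(d) for d in ["2019-07-01", "01/07/2019", " 1.7.2019 "]]
```

A value that matches none of the formats raises an error, so odd entries end up on a list for a human to check rather than being guessed at.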

It has a couple of downsides though. First of all, you need to have the right mindset for this kind of work. Patience is not in everybody’s dictionary. Working systematically is not a given either, and working precisely is not easy. Basically, it is a tedious job.

Secondly, if the number of files, or the number of columns in them, is big, it will in practice take a lot of tedious work. Even if you have the right mindset for it, you will have problems keeping up the required level of focus in the face of work interruptions, potential bad nights of sleep, work pressure and so on. Did you indeed check the format of column BC in file 29? Or did you stop at BE before going for a lunch break?

Thirdly, the details of the manual labor get lost over time, unless you have made really good notes. If you are working in science, a reviewer may want to see those notes, so they need to be readable by anyone. You may have to make individual data corrections, which are virtually impossible to trace. And what do you do if you realize later on that your corrections need to be corrected?
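One way to keep individual corrections traceable is to write them down as data and apply them to a copy, instead of editing the original cells. The sketch below shows the idea in Python. The field names (`row`, `column`, `old`, `new`, `reason`) and the sample values are invented for this example, and this is not necessarily how the tool I am building works.

```python
import copy

def apply_corrections(rows, corrections):
    """Return a corrected copy of `rows`; the original rows are left untouched.

    Each correction names a row index, a column, the expected old value,
    the new value and a reason. If the old value does not match what is
    in the cell, the correction is refused with an error.
    """
    corrected = copy.deepcopy(rows)
    for c in corrections:
        current = corrected[c["row"]][c["column"]]
        if current != c["old"]:
            raise ValueError(
                f"row {c['row']}, column {c['column']!r}: "
                f"expected {c['old']!r} but found {current!r}"
            )
        corrected[c["row"]][c["column"]] = c["new"]
    return corrected

# Made-up data and a made-up correction.
original = [{"date": "2019-07-01", "nest_count": "40"}]
corrections = [
    {"row": 0, "column": "nest_count", "old": "40", "new": "4",
     "reason": "typing error, checked against the paper form"},
]
fixed = apply_corrections(original, corrections)
```

Because the list of corrections is itself a small table, it can be stored next to the data, shown to a reviewer, and corrected later without touching the original files.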

Arguably, much of the cloud-based software can keep track of changes, but for how long will its providers offer the service, and for how long will you keep the files in the cloud?

So, yes, in theory the problem can be solved manually, but in practice it may be a different story. Wouldn’t it be nice if there were a software tool for this? That is exactly what I am developing for the sea turtle researchers. I will write about it in another post.

A page-length version of both posts can also be found here on my website for future reference.
